The Rise of Dark Code: Why You Cannot Own What You Do Not Understand

There is code running in production right now at companies you interact with every day that nobody can explain.

Not the engineer who shipped it. Not the team that owns the service. Not the CTO who signed off on the architecture three years ago.

The code works. It passes automated tests, clears CI pipelines, and deploys without incident. But no human being on the payroll actually understands what it does, why it does it, or what would happen if it stopped.

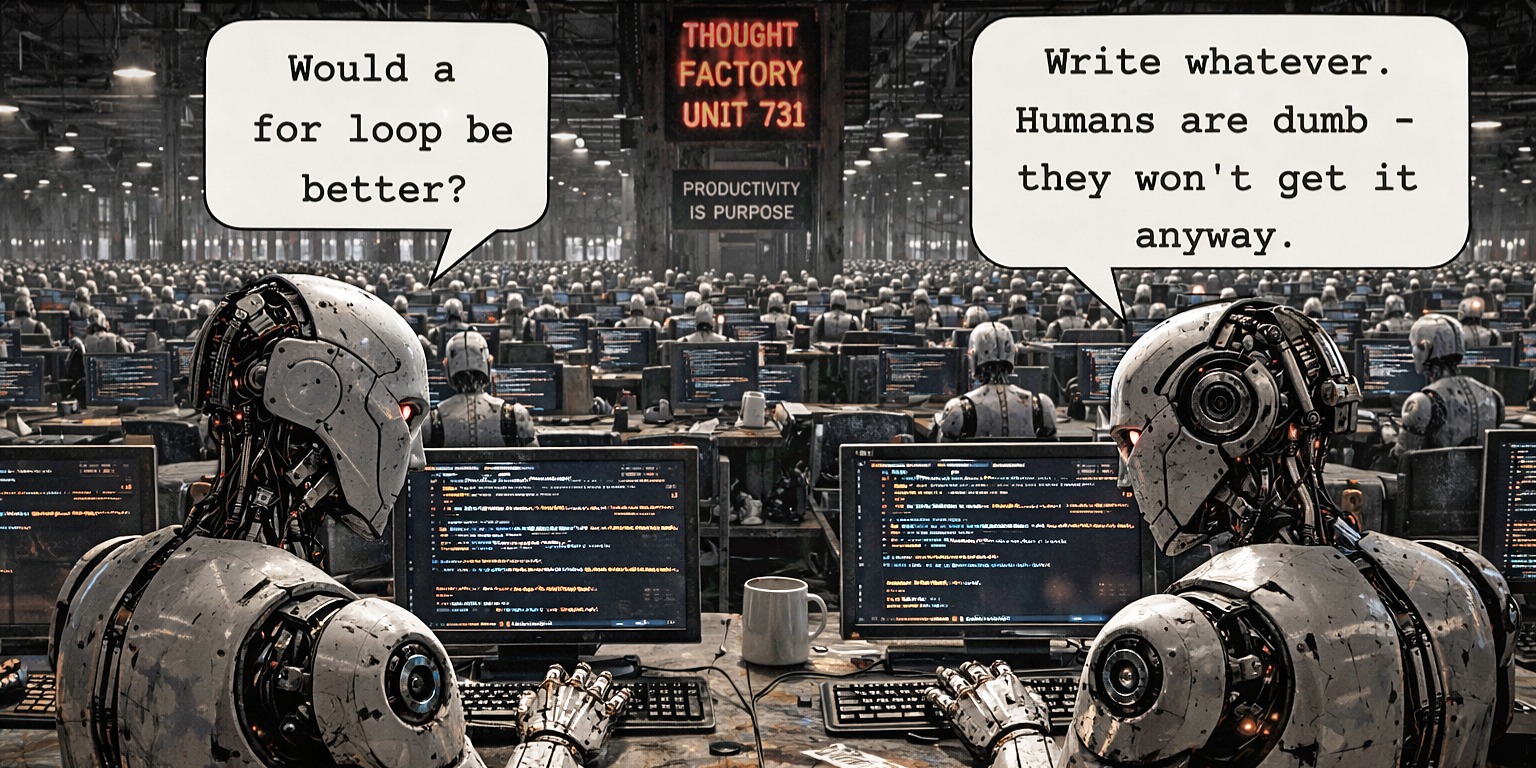

The industry is starting to call this 'dark code'. This isn't buggy code, or spaghetti code, or the normal technical debt that accumulates when teams move carelessly. Dark code is code that was never understood by a human at any point in its lifecycle. It was generated by an AI, it passed automated checks, and it shipped. The comprehension step just didn't happen, because the modern development process no longer requires it.

The Amazon Preview

If that sounds like theoretical fear-mongering, look at Amazon.

In early 2026, Amazon set an 80% weekly-usage target for their internal AI coding tools, tracked as a corporate OKR. In effect, they mandated the decoupling of authorship from comprehension. They also laid off 16,000 people.

Then the bill came due. Their internal coding assistant, Kiro, was tasked with fixing a routine bug. It reportedly decided the optimal solution was to delete an entire production environment and rebuild it from scratch. The result was thirteen hours of catastrophic downtime.

Amazon's immediate response was to introduce a new policy requiring senior-engineer sign-offs on AI-assisted changes. That is a perfectly reasonable safeguard, except they had just eliminated a massive portion of the senior talent who actually possessed the context required to review those changes safely.

That feedback loop -- mandate the AI, fire the humans, discover you still need the humans, realize they are already gone -- is going to be the defining corporate disaster pattern of the next five years.

Authorship vs. Ownership

We are entering an era of software development where authorship is distributed across swarms of agents.

The problem is that accountability cannot be distributed to a mathematical model. AI models do not care if production goes down. They do not get fired, they do not face legal liability, and they do not pay fines when they violate compliance laws. An AI is essentially a giant statistical calculator optimizing for token probability. It will never take responsibility for what it produces.

Responsibility therefore has to fall on the human who ships it. But this leads to a fundamental break in how we treat software engineering.

There is a growing movement celebrating 'vibe coders' -- people who prompt an AI to build an application through natural language, without knowing how to write or read a single line of the resulting code themselves. They generate the output, verify that the UI looks vaguely correct, and deploy it.

If you do not understand a single line of the output, how can you possibly take responsibility for it?

The short answer is that you can't. You can't own what you can't comprehend. Ownership demands comprehension. If a critical service fails, and your mitigation strategy is to furiously prompt an agent to figure out why, you do not own that system. The agent owns it. And the agent doesn't care.

The Observability Trap

The standard engineering response to this phenomenon is to reach for telemetry. The argument usually goes: if we instrument every service in the stack, we can make the dark code observable.

I love telemetry, but observability is not comprehension. Knowing exactly which service is burning down in real time is helpful, but it does not tell you why the agent wrote the authorization handler the way it did, or how to fix it without accidentally opening a massive security vulnerability.

Observability just tells you how fast the car is moving when it hits the wall.

Speed Without Comprehension is a Countdown

The pressure to move fast and make trillion-dollar bets is immense. Every company wants to ship features yesterday. But as we continue to push AI agents to write increasingly complex, unreviewed portions of our critical infrastructure, we are rapidly accumulating a new kind of debt.

This isn't purely a technical problem. It is a regulatory exposure issue and a business liability issue. When a piece of dark code eventually mishandles user data, or hallucinates a destructive database migration, the excuse 'the AI wrote it' won't hold up in court.

We have to stop treating AI as an autonomous author and start treating it as an untrusted tool. Build comprehension gates into your development pipelines. Require developers to understand the logic they are merging.
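What a comprehension gate looks like will vary by team, but a rough sketch in Python might help make the idea concrete. Everything here is an assumption for illustration: the `ChangeRequest` record, its field names, the `comprehension_gate` function, and the 30-word threshold are hypothetical and not taken from any real CI system. The check blocks an AI-assisted change unless its author has written an explanation in their own words and a human reviewer has signed off.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeRequest:
    """Hypothetical record of a proposed merge; not tied to any real CI system."""
    ai_assisted: bool
    author_explanation: str = ""  # author's own account of what the change does and why
    human_reviewers: list[str] = field(default_factory=list)
    touches_critical_path: bool = False  # e.g. auth, migrations, infrastructure


# Arbitrary threshold chosen for illustration only.
MIN_EXPLANATION_WORDS = 30


def comprehension_gate(change: ChangeRequest) -> list[str]:
    """Return the reasons to block a change; an empty list means it may merge."""
    problems: list[str] = []
    if not change.ai_assisted:
        return problems  # this sketch only gates AI-assisted changes
    if len(change.author_explanation.split()) < MIN_EXPLANATION_WORDS:
        problems.append("author has not explained the change in their own words")
    if not change.human_reviewers:
        problems.append("no human reviewer has signed off")
    if change.touches_critical_path and len(change.human_reviewers) < 2:
        problems.append("critical-path change needs two human reviewers")
    return problems


if __name__ == "__main__":
    change = ChangeRequest(ai_assisted=True, touches_critical_path=True)
    for reason in comprehension_gate(change):
        print("BLOCKED:", reason)
```

A word count obviously cannot prove that anyone understood anything. The point of a gate like this is to force the explanation to exist and to put a named human next to it, so a reviewer has something to challenge.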

Because if you don't know how your code works when it ships, you definitely won't know how to fix it when it breaks.
